AI infrastructure costs have become one of the primary obstacles to scaling intelligent autonomous agents. Running a frontier model like Claude Opus on every single API request sounds ideal in theory, but in a production environment the compute bill climbs quickly as request volume grows. That is exactly why Anthropic’s new Claude Advisor Strategy is rapidly turning heads across the developer community.

Announced on April 9, 2026, this architectural pattern pairs Opus as a strategic advisor with either Sonnet or Haiku acting as an executor. The result is near Opus-level intelligence embedded directly within your agents, delivered at a fraction of the traditional cost. In plain terms: you get the immense brainpower of Opus, but you only pay for it when it is truly needed.

In this comprehensive guide, you will learn exactly what the Claude Advisor Strategy is, how its underlying architecture functions, the technical steps to implement it via API, and why it represents a paradigm shift for engineering teams building AI-powered products.

What Is the Claude Advisor Strategy?

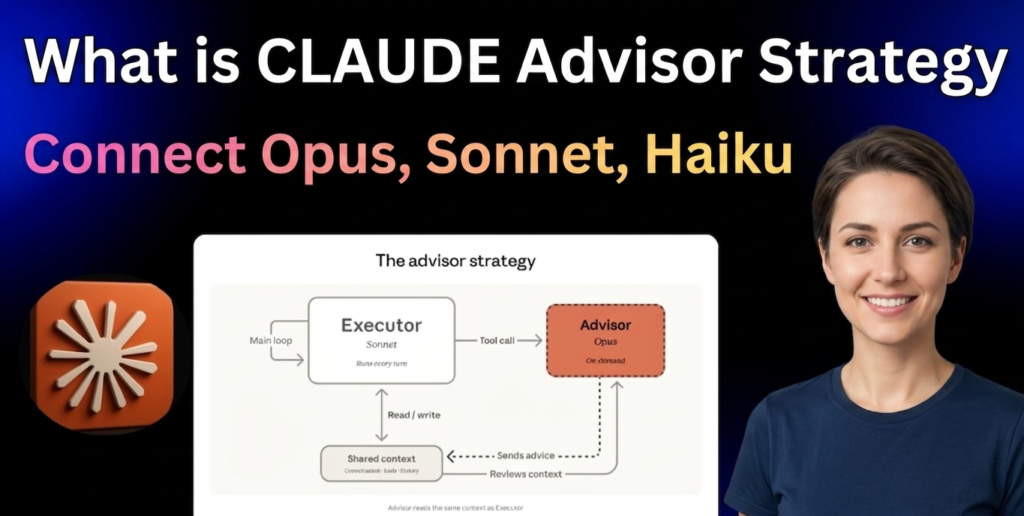

The Claude Advisor Strategy is a powerful architectural paradigm—now formalized as a native API feature—that allows developers to pair a high-intelligence model with a faster, more economical model inside a single API call.

Under this framework, Sonnet or Haiku runs the given task end-to-end. This “executor” model handles the routine work: it calls tools, processes data, reads results, and iteratively works toward a solution.

However, when the executor encounters a complex decision or edge case it cannot confidently resolve, it pauses and consults Opus for guidance.

Opus instantly accesses the shared context window and returns a concise plan, a precise course correction, or a stop signal. Once this high-level advice is delivered, the executor resumes control of the task.

Think of it like having a senior consultant on retainer. A junior engineer handles the day-to-day execution, but when a complex architectural dilemma arises, the seasoned expert steps in briefly, delivers a highly targeted recommendation, and exits. The operational cost remains impressively low, yet the final output quality stays exceptionally high.

The Anthropic Advisor Strategy: Why It Matters Now

Context is critical to understanding this shift. The AI agent market reached a massive valuation of $2.5 billion in 2025. Alongside this industry growth, Anthropic’s model lineup has evolved significantly.

Since introducing the Opus, Sonnet, and Haiku naming convention in March 2024, the landscape has shifted. By February 2026, Sonnet 4.6 actually became the preferred choice over the previous-generation Opus in rigorous developer coding evaluations.

Yet, despite this rapid progression in model capability, true cost efficiency remained elusive. Relying on Claude Opus 4.6 for every API call is prohibitively expensive, especially considering the vast majority of tasks do not require its maximum reasoning capabilities.

The Anthropic Advisor Strategy solves this exact problem.

The pattern empowers developers to utilize Haiku for high-volume, low-stakes workloads and Sonnet for heavy-duty development tasks. Opus is reserved strictly for high-level architecture and critical decision-making.

Anthropic has now baked this dynamic directly into a server-side tool, making it instantly accessible with a simple configuration change. This is not merely a cost-saving hack; it reflects a fundamental evolution in how AI systems are designed—moving away from monolithic structures toward highly efficient, hierarchical architectures.

Insight: The shift toward hierarchical AI architectures mirrors human corporate structures. By delegating routine execution to faster, cheaper models and reserving frontier intelligence solely for edge cases, enterprise developers can finally scale autonomous systems without breaking their compute budgets.

How the Advisor-Executor Architecture Works

The Inversion of the Orchestrator Pattern

Historically, complex multi-model systems relied on a massive, expensive orchestrator model to plan tasks and delegate them down to smaller worker models.

The Claude Advisor Strategy entirely flips this paradigm.

In this inverted architecture, a smaller, highly cost-effective model drives the process. It escalates issues upward without requiring complex task decomposition, a pool of worker agents, or heavy orchestration logic. Frontier-level reasoning is injected only when the executor explicitly requests it, ensuring the remainder of the execution stays at the cheaper executor-level cost.

This is a critical operational distinction. Developers no longer need to build elaborate, fragile orchestration pipelines. The executor model decides for itself when a problem is beyond its depth and calls on the advisor for help.
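The inverted control flow described above can be illustrated with a toy simulation. This is only a sketch of the pattern, not Anthropic's implementation: the names `AdvisorBudget`, `run_executor`, `is_hard`, `execute`, and `advise` are all hypothetical, and the `is_hard` callback stands in for a decision that, in the real feature, the executor model makes on its own.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class AdvisorBudget:
    """Caps advisor consultations, similar in spirit to a max_uses limit."""
    max_uses: int
    used: int = 0

    def try_consume(self) -> bool:
        """Spend one consultation if any remain; report whether it succeeded."""
        if self.used >= self.max_uses:
            return False
        self.used += 1
        return True


def run_executor(
    steps: List[str],
    is_hard: Callable[[str], bool],
    execute: Callable[[str, Optional[str]], str],
    advise: Callable[[str], str],
    budget: AdvisorBudget,
) -> List[str]:
    """The cheap executor drives every step and escalates only hard ones."""
    log = []
    for step in steps:
        if is_hard(step) and budget.try_consume():
            plan = advise(step)              # short guidance from the advisor
            log.append(execute(step, plan))  # executor resumes with the plan
        else:
            log.append(execute(step, None))  # routine work, or budget exhausted
    return log


# Hypothetical task list: three hard steps, but only two consultations allowed.
steps = ["read file", "design schema", "write tests",
         "resolve race condition", "pick sharding key"]
hard_steps = {"design schema", "resolve race condition", "pick sharding key"}
budget = AdvisorBudget(max_uses=2)

log = run_executor(
    steps,
    is_hard=lambda s: s in hard_steps,
    execute=lambda s, plan: f"{s} [advised]" if plan else s,
    advise=lambda s: f"plan for {s}",
    budget=budget,
)
print(log)
```

Note that there is no planner at the top and no worker pool: the loop belongs to the executor, and the advisor is just a function it may call a limited number of times.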

What the Advisor Does (and Doesn’t Do)

The advisor operates strictly behind the scenes. It never executes tool calls, nor does it generate user-facing output. Its sole, singular purpose is to provide internal, strategic guidance to the executor.

This strict boundary keeps the advisor’s token consumption aggressively minimized. According to Anthropic’s data, the advisor typically generates only 400 to 700 text tokens per consultation. This represents a microscopic fraction of the overall token output for a complex workflow.

Benchmark Results: Does It Actually Perform?

The benchmark data makes a compelling case for immediate enterprise adoption.

In formal evaluations, pairing Sonnet with an Opus advisor yielded a notable 2.7 percentage point increase on the SWE-bench Multilingual test compared to running Sonnet alone. Remarkably, this configuration simultaneously reduced the cost per agentic task by 11.9%. Achieving both higher performance and lower costs is a rare anomaly in AI engineering.

The performance delta is even more staggering for Haiku.

On the BrowseComp evaluation, Haiku paired with an Opus advisor achieved a score of 41.2%. This is more than double Haiku’s standalone score of 19.7%. While Haiku with an Opus advisor trails a solo Sonnet run by 29% in overall score, it costs a massive 85% less per task.

For enterprise teams managing high-volume data pipelines—such as automated customer support routing, intelligent document processing, or legal review—an 85% cost reduction coupled with acceptable intelligence trade-offs is transformative.

Real-world operators are already validating these metrics. A machine learning engineer at Eve Legal observed that on structured document extraction tasks, the advisor tool allowed Haiku 4.5 to dynamically scale its intelligence. By consulting Opus 4.6 when document complexity demanded it, the system successfully matched frontier-model quality at a remarkable five times lower cost.
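The BrowseComp figures quoted above for Haiku can be checked with a few lines of arithmetic. The two scores come from the article; the variable names are illustrative.

```python
# BrowseComp scores quoted in the article (percent).
haiku_solo = 19.7
haiku_with_advisor = 41.2

# Ratio of the advisor-assisted score to the standalone score.
ratio = haiku_with_advisor / haiku_solo

# Absolute gain in percentage points.
gain_points = haiku_with_advisor - haiku_solo

print(f"{ratio:.2f}x the standalone score, +{gain_points:.1f} points")
```

A ratio just above 2 is what backs the "more than double" claim.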

How to Use Opus as Advisor in Claude (Step-by-Step)

Implementing the Claude Advisor Strategy in your application requires just three straightforward steps.

Step 1: Add the Beta Feature Header

First, you must opt into the beta functionality by including the following string directly in your API request headers:

```text
anthropic-beta: advisor-tool-2026-03-01
```

Step 2: Declare the Advisor Tool in Your API Call

Next, declare advisor_20260301 within your Messages API request. The model handoff occurs seamlessly inside a single /v1/messages request, completely eliminating the need for extra round-trips or manual context management.

Here is the standard Python code structure:

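```python
import anthropic

client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment

response = client.messages.create(
    model="claude-sonnet-4-6",  # executor
    max_tokens=4096,  # required by the Messages API; the value here is illustrative
    extra_headers={"anthropic-beta": "advisor-tool-2026-03-01"},  # beta opt-in from Step 1
    tools=[
        {
            "type": "advisor_20260301",
            "name": "advisor",
            "model": "claude-opus-4-6",  # advisor
            "max_uses": 3,  # cap on advisor consultations per request
        },
        # ... your other tools
    ],
    messages=[...],
)
```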
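For teams that do not use the Python SDK, the same request can be assembled by hand. The sketch below only builds the headers and JSON body and does not send anything. The beta header and the advisor tool fields are taken from the steps above; `x-api-key` and `anthropic-version` are the standard Messages API headers; the helper name `build_advisor_request`, its defaults, and the `max_tokens` value are assumptions made for illustration.

```python
import json
from typing import Any, Dict, Tuple

API_URL = "https://api.anthropic.com/v1/messages"


def build_advisor_request(
    api_key: str,
    user_text: str,
    executor: str = "claude-sonnet-4-6",
    advisor: str = "claude-opus-4-6",
    max_uses: int = 3,
    max_tokens: int = 2048,  # illustrative value, not a recommendation
) -> Tuple[Dict[str, str], Dict[str, Any]]:
    """Return (headers, body) for a single /v1/messages call with the advisor tool."""
    headers = {
        "x-api-key": api_key,
        "anthropic-version": "2023-06-01",
        "anthropic-beta": "advisor-tool-2026-03-01",  # Step 1: beta opt-in
        "content-type": "application/json",
    }
    body = {
        "model": executor,  # the executor runs the task end-to-end
        "max_tokens": max_tokens,
        "tools": [
            {
                "type": "advisor_20260301",  # Step 2: declare the advisor tool
                "name": "advisor",
                "model": advisor,
                "max_uses": max_uses,
            }
        ],
        "messages": [{"role": "user", "content": user_text}],
    }
    return headers, body


headers, body = build_advisor_request("YOUR_API_KEY", "Refactor the billing module.")
print(json.dumps(body, indent=2))
```

The resulting `headers` and `body` can be passed to any HTTP client as a POST to `API_URL`.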

Step 3: Tune Your System Prompt

Finally, Anthropic strongly recommends reviewing their suggested system prompts tailored for specific use cases. Whether you are handling coding, document processing, or browsing tasks, these templates are available in the official documentation. You must adjust the prompt based on the specific operational environment of your agent to ensure optimal routing.

How to Connect Opus with Sonnet and Haiku in Claude

The ability to connect Opus with Sonnet and Haiku in Claude is now natively supported directly through the advisor_20260301 tool type.

Prior to this update, developers were forced to engineer custom orchestration layers, manually pass context arrays between disparate models, and manage complex, separate API calls. All of that operational friction has disappeared.

Now, the executor model autonomously decides when to invoke the advisor. When triggered, Anthropic’s backend automatically routes the curated context to the advisor model, retrieves the strategic plan, and seamlessly hands control back to the executor—all within a single, unified request.

This streamlined workflow delivers several distinct architectural advantages:

- Zero custom routing logic: There is no complex middleware to write, debug, or maintain.

- Unified context windows: Developers no longer need to juggle context limits between different models.

- Hardcoded cost controls: The max_uses parameter firmly caps how many times the expensive advisor can be invoked per request.

- Transparent billing: Advisor tokens are isolated and reported separately in the usage block, allowing granular tracking of API spend by tier.

Financially, advisor tokens are billed strictly at the advisor model’s premium rates. Executor tokens are billed at their respective lower rates. Because the advisor only generates a highly condensed plan—while the executor generates the bulk of the output at a lower rate—the aggregate cost stays substantially below deploying the advisor model for the entire session.
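The billing split can be made concrete with a small calculation. This is a sketch under stated assumptions: the executor rate uses the $3/$15 Sonnet figure quoted elsewhere in this article, the advisor rate is a placeholder rather than published pricing, all token counts are invented, and the example assumes the context the advisor reads is billed as advisor input.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rate:
    """Price in USD per million tokens."""
    input_per_mtok: float
    output_per_mtok: float

    def cost(self, input_tokens: int, output_tokens: int) -> float:
        total = (input_tokens * self.input_per_mtok
                 + output_tokens * self.output_per_mtok)
        return total / 1_000_000


def blended_cost(executor_rate: Rate, advisor_rate: Rate,
                 executor_in: int, executor_out: int,
                 advisor_in: int, advisor_out: int) -> float:
    """Executor tokens at executor rates plus advisor tokens at advisor rates."""
    return (executor_rate.cost(executor_in, executor_out)
            + advisor_rate.cost(advisor_in, advisor_out))


EXECUTOR_RATE = Rate(3.0, 15.0)                # Sonnet figure quoted in the article
HYPOTHETICAL_ADVISOR_RATE = Rate(15.0, 75.0)   # placeholder, not published pricing

# Invented workload: three consultations of about 600 output tokens each.
executor_in, executor_out = 200_000, 20_000
advisor_in, advisor_out = 90_000, 3 * 600

with_advisor = blended_cost(EXECUTOR_RATE, HYPOTHETICAL_ADVISOR_RATE,
                            executor_in, executor_out, advisor_in, advisor_out)
advisor_for_everything = HYPOTHETICAL_ADVISOR_RATE.cost(executor_in, executor_out)

print(f"executor + advisor:      ${with_advisor:.3f}")
print(f"advisor model for whole: ${advisor_for_everything:.3f}")
```

Under these made-up numbers the advisor's input tokens, not its short output, make up most of the advisor charge, which is why capping consultations with max_uses matters.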

Real-World Use Cases for the Claude Advisor Strategy

The true value of this architecture becomes obvious when applied to complex enterprise workloads.

1. Software Development Agents

The CEO of Bolt reported that the advisor makes superior architectural decisions on complex coding tasks while adding zero overhead to simple requests. The resulting logic plans and coding trajectories are “night and day different.” For autonomous code-generation agents managing diverse repositories, this guarantees smarter decisions exactly when they are required.

2. Legal Document Processing

Legal platforms processing dense contracts, discovery files, or compliance documentation can deploy Haiku for rapid, routine data extraction. Opus is then utilized solely to flag legal ambiguities or interpret highly unusual clauses. This strategy achieves frontier-level legal analysis at a fraction of the standard price.

3. Customer Support Automation

High-volume customer support systems can safely rely on Haiku or Sonnet to resolve standard user queries. Escalation to Opus occurs only when a customer’s situation demands nuanced judgment, empathy, or complex policy interpretation. Operational costs plummet while service quality remains pristine.

4. Research and Browsing Agents

The co-founder of Genspark highlighted definitive improvements in agent turns, tool utilization, and overall success scores. The native tool actually outperformed custom planning modules they had built internally. For web research, the advisor excels at navigating the complex decision branches that typically confuse smaller models.

Cost Breakdown: What Does the Advisor Strategy Actually Cost?

Understanding the financial impact requires looking at the actual performance-to-cost ratios.

| Configuration | Relative Cost | BrowseComp Score |
| --- | --- | --- |
| Haiku solo | Lowest | 19.7% |
| Haiku + Opus advisor | Low | 41.2% |
| Sonnet solo | Medium | ~56% (est.) |
| Sonnet + Opus advisor | Medium-Low | Improved |
| Opus solo | Highest | Highest |

The underlying economics are clear. At $3 per million input tokens and $15 per million output tokens, Sonnet 4.6 competently handles over 90% of standard coding tasks without compromising quality. The advisor configuration makes that dynamic even more financially defensible for enterprise-scale workloads.

You get the refined judgment of Opus without enduring Opus pricing for the entire execution run.
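The qualitative cost column can be given rough numbers from the percentages quoted earlier, with solo Sonnet as the baseline. Treat this as an indicative sketch only: the 11.9% figure was reported on SWE-bench Multilingual and the 85% figure on BrowseComp, so the two are not measured on the same workload, and the function name `relative_cost` is illustrative.

```python
def relative_cost(reduction_percent: float, baseline: float = 1.0) -> float:
    """Cost relative to the baseline after a percentage reduction."""
    return baseline * (1.0 - reduction_percent / 100.0)


# Solo Sonnet is the baseline (1.0); reductions are the figures quoted in the article.
costs = {
    "Sonnet solo": 1.0,
    "Sonnet + Opus advisor": relative_cost(11.9),
    "Haiku + Opus advisor": relative_cost(85.0),
}

# Print cheapest first.
for name, value in sorted(costs.items(), key=lambda item: item[1]):
    print(f"{name}: {value:.3f}")
```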

FAQ

Q. What is the Claude Advisor Strategy?

It is an advanced multi-model architecture where Claude Opus 4.6 acts as a strategic advisor to a faster executor model, such as Sonnet or Haiku. The executor manages the task end-to-end and consults Opus only at critical decision points, ensuring high intelligence at a low average cost.

Q. What is Claude Advisor in the API?

Claude Advisor refers to the advisor_20260301 server-side tool, currently available in beta on the Claude Platform. It seamlessly routes complex decision-making moments to Opus within a single API call, completely bypassing the need for custom orchestration code.

Q. How do I use Opus as an advisor in Claude?

You simply add the beta header anthropic-beta: advisor-tool-2026-03-01, declare the advisor_20260301 tool with "model": "claude-opus-4-6" in your Messages API request, and set a max_uses limit. The executor model handles the routing and handoff automatically.

Q. Can I connect Opus with both Sonnet and Haiku?

Yes. The advisor tool is fully compatible with either Sonnet 4.6 or Haiku 4.5 functioning as the executor. Haiku yields the most dramatic cost savings, whereas Sonnet delivers the highest overall performance in this configuration.

Q. How is the advisor billed?

Advisor tokens are billed at Opus rates, and executor tokens are billed at Sonnet or Haiku rates. Because the advisor only generates short, targeted planning outputs (usually 400–700 tokens), the blended operational cost is drastically lower than using Opus for the entire task.

Q. Is the advisor tool available to all Claude API users?

It is currently in beta on the Claude Platform. Developers can access it immediately by including the appropriate beta header in their API requests.

Q. Does the advisor produce user-facing output?

No. The advisor exclusively provides internal, strategic guidance to the executor model. All final, user-facing output is generated by the executor model (Sonnet or Haiku).

Conclusion

The Claude Advisor Strategy represents a foundational evolution in how scalable AI systems are architected.

Developers are no longer forced to make a painful compromise between top-tier intelligence and sustainable unit economics. You can now have both: Opus-level reasoning applied exactly when it matters, with Sonnet or Haiku aggressively managing the overhead.

For enterprise teams building at scale, the reported metrics are compelling. You achieve better benchmark scores and lower per-task costs, with no custom orchestration layer to build or maintain. Early adopters across legal, software development, and customer support sectors are already reporting massive efficiency gains.

Whether you are engineering an autonomous coding agent, a complex document intelligence pipeline, or a global customer service network, the Claude Advisor Strategy provides a principled, highly cost-effective path to frontier AI performance.

The API change amounts to one beta header and one tool declaration. The only remaining question is how quickly your engineering team will deploy it.