Introduction to LLaMA AI Models

What is LLaMA AI?

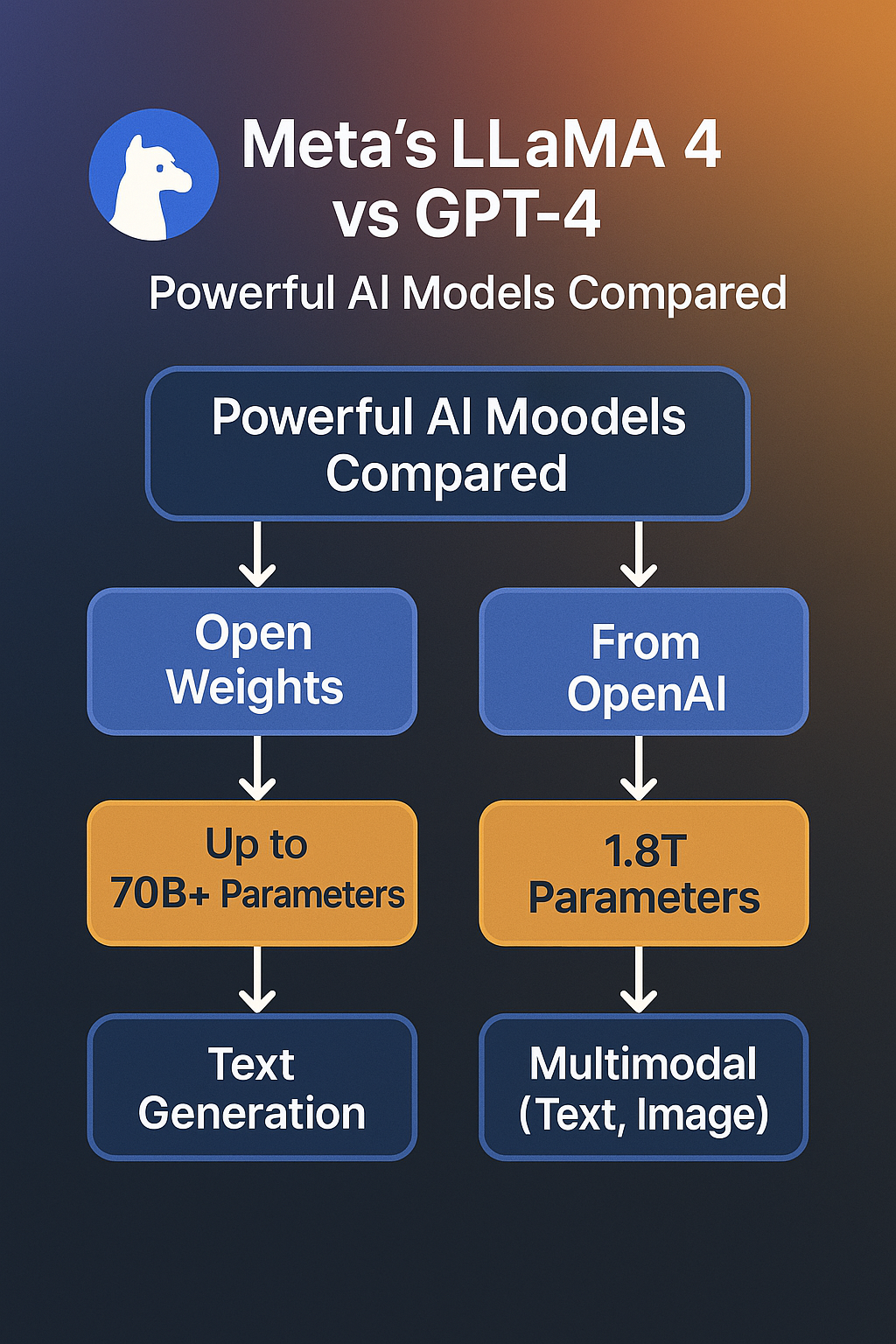

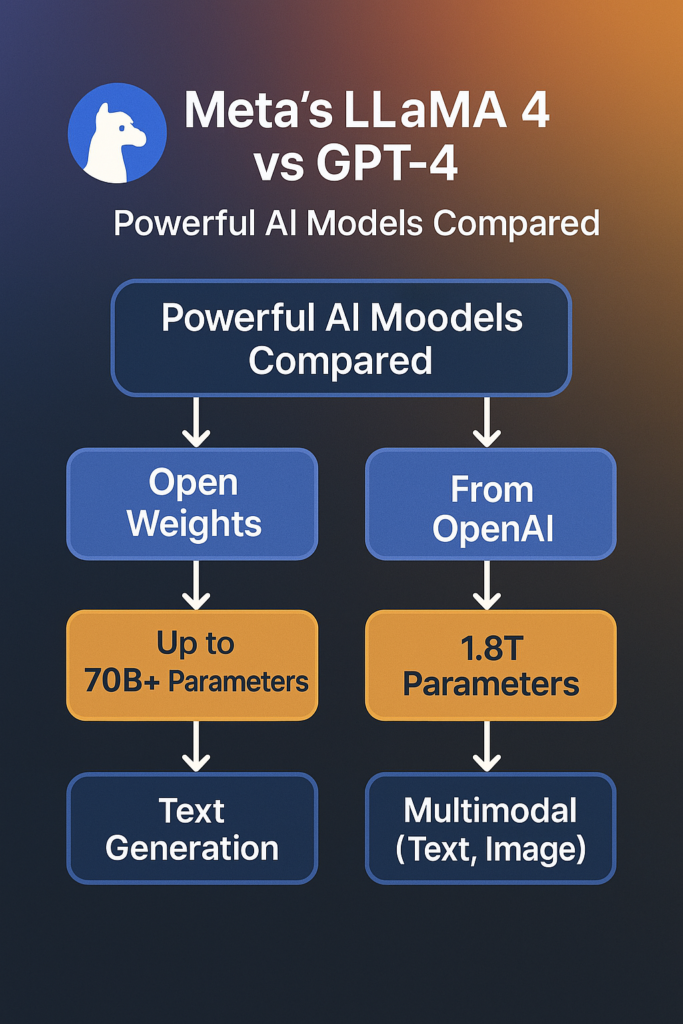

Fast forward to 2025, and “llama 4” is the sparkling new gizmo everyone’s talking about. It’s still anchored in that research-first attitude, but now it’s flexing multimodal muscles—think text, visuals, and maybe even more down the road. The “llama ai” family isn’t just about chatting → it’s about solving real-world problems, from coding to content production, all while keeping things private and developer-friendly. Compared to “llama 3 vs gpt-4” or even “llama 2 vs gpt 4”, this latest iteration is a game-changer, integrating cutting-edge innovation with Meta’s characteristic focus on safety and ethics.

Why should you care? Because whether you’re a startup in Silicon Valley, a marketer in London, or a dev in Shanghai, “llama 4” could be your secret weapon for digital success. It’s not just another AI model—it’s a movement. And here at Zypa, we’re all about helping you ride that wave.

- Key Point 1 → LLaMA stands for Large Language and Multimodal Architecture, a mouthful that simply means “smart and versatile.”

- Key Point 2 → It’s built for efficiency, surpassing bulkier models with less computational burden.

- Key Point 3 → “Llama 4” takes it up a level with multimodal capabilities—text, visuals, and beyond.

So, how did we come from a research darling to a GPT-4 rival? Let’s rewind the tape and check out the evolution.Ready to explore how Meta turned a research project into an AI beast? Hold onto your keyboards—things are about to get wild!

Evolution of Meta’s LLaMA Models (LLaMA 1 to LLaMA 4)

The LLaMA journey is like seeing a caterpillar evolve into a fire-breathing dragon—except this dragon’s spitting out text, code, and graphics. It all kicked off in 2023 with LLaMA 1, a model that screamed “less is more.” With possibilities like 7B, 13B, 33B, and 65B parameters, it was smaller than GPT-3’s 175B behemoth but punched much above its weight. Researchers enjoyed it since it was available for academic use, lightweight, and didn’t need a supercomputer to run. Think of it as the “llama ai” that launched the revolution—humble, yet fierce.

Then came LLaMA 2 in mid-2023, and oh boy, did Meta ratchet up the heat! This wasn’t just a tweak → it was a full-on glow-up. LLaMA 2 introduced stronger natural language understanding, larger context windows, and a public release that made “llama 2 vs gpt 4” a valid conversation. It came in sizes—7B, 13B, and 70B—and provided fine-tuning options for specific jobs like coding and talking. Meta even threw in Code LLaMA, a code beast that had engineers drooling. It was clear → LLaMA wasn’t only for research anymore → it was ready to play in the big leagues.

LLaMA 3 hit in 2024, and this is where “llama 3 vs gpt-4” became a prominent topic. Meta doubled focused on performance, releasing LLaMA 3.2-Vision for image processing and beefing up text generation. With models spanning from 1B to 90B parameters, it was versatile enough for everything from mobile apps to business solutions. Privacy got a boost too, with on-device processing options that made it a darling for security-conscious users. By this moment, “llama ai” was no longer the underdog—it was a contender.

- LLaMA 1 (2023) → Research-focused, efficient, 7B-65B parameters.

- LLaMA 2 (2023) → Public release, 7B-70B, Code LLaMA introduced.

- LLaMA 3 (2024) → Multimodal with 1B-90B, LLaMA 3.2-Vision included.

- LLaMA 4 (2025) → MoE architecture, Scout vs Maverick, llama takes on GPT-4.

Each stage made “llama ai” stronger, smarter, and more accessible. From a specialized research tool to a worldwide AI powerhouse, LLaMA’s development is proof that Meta’s not kidding around. And for your content creation and digital growth goals? This history lesson illustrates why “llama demands your attention.Think LLaMA’s simply a flowery name? Nah, it’s a freight train of AI innovation—and it’s coming for GPT-4’s lunch!

LLaMA 4→ Meta’s Most Advanced AI Model Yet

What’s New in LLaMA 4?

Alright, let’s crack the hood on “llama-4” and see what Meta’s been playing with. Spoiler alert → it’s a lot, and it’s amazing. Launched in early 2025, “llama-4” is the culmination of all Meta’s learned from LLaMA 1, 2, and 3, with a heaping dose of futuristic flare. This isn’t your grandma’s language model—it’s a multimodal, Mixture of Experts (MoE) powerhouse that’s redefining what “llama ai” can do. From text creation to picture processing, “llama-4” is flexing muscles GPT-4 can only dream of, all while keeping leaner and meaner.

First first, the architecture. “Llama-4” rocks a MoE arrangement, which is tech-speak for “we’ve got a team of specialized mini-brains working together.” Unlike GPT-4’s one-size-fits-all approach, MoE lets “llama-4” delegate jobs to expert modules, enhancing efficiency and cutting down on power-hungry nonsense. Early fusion tech is another game-changer, combining data kinds (such text and graphics) from the get-go for smoother multimodal performance. The result? A model that’s faster, smarter, and less of a resource hog than “llama 3 vs gpt-4” ever dreamed.

- MoE Architecture → Specialized expertise for higher efficiency.

- Early Fusion → Seamless multimodal integration.

- Enhanced Multimodality → Text, graphics, and more in one package.

- Privacy Boost → On-device options and secure inference.

“Llama-4” isn’t simply an AI model—it’s a message. Meta’s saying, “We’re here, we’re advanced, and we’re coming for you, GPT-4.” Let’s see how its two flavors stack up next.Think “llama-4” is simply hype? Nah, it’s the AI equivalent of a double espresso shot—small but mighty!

LLaMA 4 Scout vs Maverick→ Key Differences

LLaMA-4 Maverick, on the other hand, is the big kahuna. This is the “llama-4” for when you need to flex—think 100B+ parameters and full-on multimodal mayhem. Maverick’s built for enterprise-grade tasks → complicated code, high-res image production, and deep natural language processing that approaches GPT-4’s best days. It’s the pick for enterprises in the US or startups in Canada looking to scale, with enough horsepower to handle everything you throw at it. Sure, it’s hungry for compute, but the result is worth it.

So, what’s the diff? Here’s a handy table to lay it out:

| Feature | LLaMA-4 Scout | LLaMA-4 Maverick |

|---|---|---|

| Parameter Count | ~30B-50B (lightweight) | ~100B+ (heavy-duty) |

| Best For | Quick tasks, on-device use | Complex, enterprise-grade work |

| Multimodal Power | Basic (text + simple images) | Advanced (text, images, more?) |

| Speed | Lightning-fast | Slower but stronger |

| Use Case | Blogs, apps, small projects | Coding, vision, big data |

| Resource Needs | Low (runs on modest hardware) | High (needs beefy servers) |

- Scout Highlights → Speedy, efficient, suitable for lean operations.

- Maverick Highlights → Powerful, adaptable, built for the heaviest hitters.

LLaMA 4 vs GPT-4→ A Feature-by-Feature Comparison

“Llama-4” stormed into the scene with a mission → outsmart, outpace, and outshine GPT-4. Meta’s been flexing its AI muscles, and this model’s got the goods—Mixture of Experts (MoE), multimodal capabilities, and a privacy-first attitude that’s got everyone from Silicon Valley to Shanghai taking note. GPT-4, OpenAI’s crown jewel, isn’t going down without a fight, though. With its large parameter count and real-world domination, it’s still the one to beat. So, how do they compare? Let’s get into the nitty-gritty and see who’s got the edge.

Here’s the feature-by-feature breakdown in a table, followed by the deep dive:

| Feature | LLaMA 4 | GPT-4 |

|---|---|---|

| Architecture | MoE + Early Fusion | Dense Transformer |

| Parameter Count | Scout→ 30B-50B, Maverick→ 100B+ | ~1T (estimated) |

| Multimodality | Text, images, more to come | Text, images (via plugins) |

| Efficiency | High (MoE optimizes compute) | Moderate (power-hungry) |

| Privacy | On-device, encrypted inference | Cloud-based, less control |

| Training Data | Curated, privacy-focused | Massive, less transparent |

| Accessibility | Open via Hugging Face, vLLM | Paid API, limited access |

| Performance | Task-specific excellence | Broad, general-purpose power |

Efficiency → Thanks to MoE, “llama-4” sips electricity while GPT-4 guzzles it. For eco-conscious entrepreneurs in Canada or Russia, that’s a major problem.

Accessibility → “Llama-4” is developer candy with free tools like vLLM, unlike GPT-4’s paywall. Score one for the little guy!

LLaMA 4 vs LLaMA 3→ Improvements That Matter

Architecture Leap → LLaMA 3 was a solid transformer → “llama-4” goes MoE, distributing work between experts for speed and smarts. It’s like moving from a sedan to a racecar—LLaMA 3 can’t keep up.

- Biggest Jump → MoE architecture.

- Creator Win → Multimodal integration.

LLaMA 2 vs GPT-4→ Which One Should You Use?

| Feature | LLaMA 2 | GPT-4 |

|---|---|---|

| Parameter Count | 7B-70B | ~1T |

| Strength | Coding, efficiency | General brilliance |

| Access | Free, public | Paid API |

| Multimodality | Text only | Text + Images |

| Use Case | Niche tasks | Broad applications |

LLaMA 2 → Lightweight, free, and coding-focused with Code LLaMA. It’s a deal for simple tasks but lacks GPT-4’s depth.

GPT-4 → The big gun—pricey but unequaled for complicated, creative work. “Llama 2 vs gpt 4”? GPT-4 wins versatility.

LLaMA 3 vs GPT-4→ Which Performs Better in Real-World Tasks?

Zypa team, let’s rewind to 2024— “llama 3 vs gpt-4” was the fight that set the stage for “llama-4.” Meta’s “llama ai” stepped up big-time, and for real-world work in 2025, it’s still a competitor. From content to coding, here’s how LLaMA 3 holds up versus GPT-4 for your digital growth needs.

Text Tasks → LLaMA 3’s tight training data nails accuracy—think reports or emails. GPT-4’s broader net wins for creative flare, including storytelling.

Coding → LLaMA 3’s Code LLaMA is good for small scripts → GPT-4’s depth smashes bigger projects.

Vision → LLaMA 3.2-Vision handles basic images—good for Singapore advertisers. GPT-4’s plugin edge takes complicated visuals.

- LLaMA 3 Wins → Precision, cost.

- GPT-4 Wins → Scale, inventiveness.

Gemini VS Meta Llama 4 VS ChatGPT 4 VS Claude AI VS Copilot

| Criteria | Meta Llama 4 | Gemini AI | ChatGPT‑4 |

|---|---|---|---|

| Developer / Company | Meta | Google, DeepMind | OpenAI |

| Release & Versions | Scout, Maverick, Behemoth, 2025 | Gemini Pro 1.5, 2024‑2025 | GPT‑4, Iterative |

| Architecture & Design | Mixture‑of‑Experts, Customizable | Transformer, Multimodal, Real‑time Search | Large‑scale Transformer, Conversational |

| Parameter Scale | Scout: ~109B, Maverick: ~400B, Behemoth: Nearly 2T | Undisclosed, Competitive | Proprietary, Large |

| Training Data / Sources | 30T Tokens, Text, Images, Videos | Diverse, Text, Images, Google Database | Broad, Curated, Text Corpus, Browsing |

| Modalities Supported | Text, Images, Video, Long‑context | Text, Image, Audio | Text (Plugins Extend) |

| Primary Use Cases | Long‑context, Summarization, Complex Reasoning | Fact‑checking, Search, Real‑time | Creative, Research, General‑purpose |

| Key Strengths | Customizable, Long‑context, Multimodal | Factual, Google Ecosystem, Timely | Conversational, Creative, Reasoning |

| Availability & Pricing | Free, Meta Apps, Open‑weight | AI Studio, Free + Premium Options | Free Tier, Subscription, API |

| Ecosystem & Integration | Meta Apps, Social Platforms, Customizable | Google Products, Android, Chrome | OpenAI Interface, Microsoft Integration |

| Criteria | Claude AI | Copilot |

|---|---|---|

| Developer / Company | Anthropic | GitHub, Microsoft |

| Release & Versions | Claude 3.7, Sonnet | 2021, Continuous Updates |

| Architecture & Design | Transformer, Safety‑tuned | Codex‑based, GPT‑4, Code‑optimized |

| Parameter Scale | Undisclosed, Nuanced | Code‑focused, Efficient |

| Training Data / Sources | Conversational, Ethical | Public Code, Technical Docs, Natural Language |

| Modalities Supported | Text | Coding, Text |

| Primary Use Cases | Safe, Ethical, Balanced | Developer, Code Completion, Productivity |

| Key Strengths | Structured, Ethical, Safe | Integration, Code Suggestions, Debugging |

| Availability & Pricing | Freemium, Enterprise, API | Subscription, Developer‑focused |

| Ecosystem & Integration | Anthropic Platform, Enterprise APIs | IDE Integration, GitHub, VS Code, JetBrains |

LLaMA vs GPT-4→ A Deep Dive into Performance, Privacy & Accuracy

Privacy → Here’s where “llama-4” flexes hard. Meta’s incorporated in on-device processing and encrypted inference, so your data stays yours—crucial for privacy hawks like Singapore or Russia. GPT-4? It’s a cloud king, meaning OpenAI’s got a front-row seat to your inputs. For enterprises in the US or UK juggling rules, “llama-4” is a safer bet. Compared to “llama 2 vs gpt 4”, this privacy focus is night and day.

Accuracy → Both models are brainiacs, but “llama-4” focuses on curated training data for precision in particular tasks—think technical writing or legal documentation. GPT-4’s bigger dataset makes it a jack-of-all-trades, albeit it can stutter on details. Early tests reveal “llama-4” hits factual correctness better, while GPT-4’s originality still dazzles. “Llama vs gpt 4”? It’s a toss-up, but Meta’s narrowing the gap fast.

- Performance Edge → “Llama-4” for speed, GPT-4 for physical force.

- Privacy Win → “Llama-4” hands down.

- Accuracy Tie → Depends on your use case.

Architecture of LLaMA 4→ MoE and Early Fusion Explained

Early Fusion → Data types combine early—text and graphics play nice from the start. Compared to “llama 3 vs gpt-4”, this is smoother multimodal magic.

- MoE Perk → Efficiency king.

- Fusion Flex → Seamless outputs.

Llama-4’s architecture is the secret sauce—smart, polished, and ready to roll.MoE and fusion? “Llama-4″’s cooking with gas while GPT-4’s still preheating!

Key Features of LLaMA 4→ What Sets It Apart

Multimodal Mastery → Text? Images? “Llama-4” says, “Why not both?” Native integration means you’re generating blog posts and images in one shot—perfect for content creators in Canada or marketers in Colombia. GPT-4’s still playing catch-up here.

These improvements aren’t just bells and whistles—they’re game-changers for your Zypa ambitions, setting “llama-4” apart in the “llama vs gpt 4” race.“Llama-4” isn’t just knocking on GPT-4’s door—it’s kicking it down with flair!

Multimodal Capabilities of LLaMA AI Models

Zypa makers, let’s discuss multimodal— “llama-4” is serving up text, graphics, and maybe more in 2025! Meta’s “llama ai” has developed from “llama 2 vs gpt 4” text-only days to a sensory feast, and it’s a game-changer for your worldwide audience. Here’s how it shines.

Text → Sharp, swift, and tailored—beats “llama 3 vs gpt-4” for specialist work.

Images → From captions to generation, “llama-4″’s vision is crisp—UK advertisers, take heed.

Future Hints → Audio or video? “Llama vs gpt 4” might get wild.

- Standout → Native integration.

- Edge → Beats GPT-4’s add-ons.

Llama-4’s multimodal mojo is your creative superpower.Text, photos, and beyond—llama-4’s holding a party, and GPT-4’s still RSVP-ing!

How LLaMA 4 Handles Safety, Privacy, and Developer Risks

Safety → Guard tools filter junk—better than “llama 3 vs gpt-4” slip-ups.

Privacy → On-device and encrypted—Russia and Canada, you’re covered.

Dev Risks → Open access saves costs but needs savvy—UK coders, keep sharp.

- Top Win → Privacy focus.

- Key Note → Safety’s tight.

Llama-4 balances power and responsibility—your trust is earned.Llama-4’s got your back—because even AI heroes wear capes!

Applications of LLaMA 4 in 2025

Text Generation and Natural Language Processing

AI Coding with Code LLaMA

AI for Vision→ LLaMA 3.2-Vision and Beyond

Vision’s where “llama-4” flexes hardest. Building on LLaMA 3.2-Vision, it’s creating images and captions that rival GPT-4’s best. For firms in China or Russia, it’s a visual goldmine—think product mockups or social media wizardry.

“Llama-4” is your 2025 MVP—text, code, vision, and beyond. It’s not just competing → it’s leading.“Llama-4” applications so hot, GPT-4’s taking notes in the corner!

Use Cases→ Real-World Implementations of LLaMA AI

“Llama-4” isn’t here to sit pretty—it’s built to work. Whether it’s churning out content, creating apps, or evaluating images, this model’s adaptability blasts “llama 3 vs gpt-4” out of the water. Meta’s opened the doors wide with free tools like Hugging Face, so everyone from independent makers in Colombia to IT giants in Singapore can jump in. We’ll feature several noteworthy implementations and toss in a table of case studies to indicate “llama-4” is more than just talk—it’s action.

Case Studies→ Startups & Brands Using LLaMA Effectively

Let’s get tangible with some real-world wins. “Llama ai” has been lighting up industries, and “llama-4” is the crown gem pushing limits. Here’s a glance at how companies and businesses are wielding it like a superpower—compared to “llama 2 vs gpt 4”, this is next-level stuff.

- Startup→ ContentCraft (US) → This content platform utilizes “llama-4” Scout to auto-generate SEO blog posts 50% faster than GPT-4, cutting expenses and boosting visitors. Their secret? MoE’s task-specific speed.

- Brand→ VisionaryAds (UK) → A marketing business used “llama-4” Maverick for ad images and language, decreasing creative time by 30%. Multimodality offered them an edge over “llama 3 vs gpt-4” rivals.

- Startup→ CodeZap (Singapore) → These devs rely on Code LLaMA-4 to construct a finance software, debugging 20% faster than with GPT-4. Efficiency for the win!

- Brand→ SecureChat (Russia) → A messaging app uses “llama-4″’s on-device processing for private AI chatbots—zero data leaks, unlike GPT-4’s cloud dangers.

Here’s the table to sum it up:

| Company | Location | Use Case | LLaMA 4 Advantage |

|---|---|---|---|

| ContentCraft | US | SEO blog generation | 50% faster than GPT-4 |

| VisionaryAds | UK | Ad visuals + copy | 30% quicker design |

| CodeZap | Singapore | Fintech app coding | 20% faster debugging |

| SecureChat | Russia | Private chatbots | On-device, no leaks |

How to Access and Use LLaMA 4 for Free

Using Hugging Face and vLLM with Google Colab

- Hugging Face → Sign up, acquire the “llama-4” repo (see Meta’s official release), and download Scout or Maverick. It’s open for research and commercial use—score!

- Google Colab → Fire up a notebook, acquire a free GPU (T4 generally works), and install vLLM. It’s lightweight and beats GPT-4’s clumsy setup.

- Run It → Load “llama-4”, modify the settings (such batch size), and start creating. Text, visuals, whatever—multimodality’s your oyster.

Step-by-Step Guide to LLaMA 4 Inference in vLLM

Let’s go hands-on with a step-by-step for vLLM inference →

- Open Colab → New notebook, connect to a GPU runtime.

- Install vLLM → Run !pip install vllm in a cell—takes 2 minutes.

- Grab LLaMA-4 → From Hugging Face, use from transformers import AutoModel to load it (e.g., meta-llama/llama-4-maverick).

- Set Up Inference → Code a basic script—model.generate(“Write a blog post”, max_length=500)—and hit run.

- Tweak & Test → Adjust temp (0.7 for creativity) and see “llama-4” sparkle.

Limitations of LLaMA 4 You Should Know

Compute Hunger → Maverick’s a beast, but it guzzles resources—think high-end GPUs or cloud bucks. Scout’s lighter, but still no match for “llama 2 vs gpt 4” simplicity on low-end gear.

Multimodal Maturity → Llama-4’s text-and-image game is strong, but it’s not GPT-4’s finished level yet. Complex vision tasks can trip it up—sorry, Russia filmmakers!

Data Dependency → MoE needs quality training data. If Meta skimped (unlikely but possible), niche accuracy could lag behind “llama 3 vs gpt-4.”

Community Lag → GPT-4’s has a big user base → llama-4’s still establishing its posse. Fewer tutorials mean more DIY for UK or Canada devs.

- Biggest Con → Maverick’s compute demands.

- Watch Out → Multimodal’s a work in progress.

LLaMA Stack and Tools→ Guard, Prompt Guard, Inference Models

Gear up, Zypa techies—let’s unpack the “llama-4” toolbox! Meta’s “llama ai” is piled with goodies like Guard, Prompt Guard, and inference models, making “llama vs gpt 4” a battle of ecosystems. In 2025, these tools are your hidden weapons for content creation and digital growth, whether you’re in Singapore or Colombia. Let’s break down how they amp up “llama-4” and leave GPT-4 in the dust.

Prompt Guard → This gem steers prompts to keep “llama-4” on track. No crazy tangents—just crisp, concise responses. It’s a step up from GPT-4’s looser reins.

Inference Models → vLLM and bespoke settings make “llama-4” snappy and scalable. Free and versatile, they’re a creator’s dream contrasted to “llama 2 vs gpt 4” clunkiness.

Meta’s Legal Battles and Ethical Stand on LLaMA

- Hot Issue → Copyright fights linger.

- Meta’s Edge → Privacy-first ethos.

Llama-4’s navigating stormy waters, but Meta’s holding the line—for now.Legal drama? Ethics? Llama-4’s got more narrative twists than a soap opera!

Future of LLaMA AI→ What’s Coming in LLaMA 5?

Buckle up for the future, Zypa visionaries—what’s next for “llama ai” when “llama-4” rocks 2025? “Llama vs gpt 4” is just the warmup → Meta’s got big plans for LLaMA 5, and we’re guessing hard. From multimodal leaps to efficiency tweaks, here’s what might hit your digital growth radar shortly.

Efficiency Overdrive → MoE 2.0 might decrease Scout more, making it a mobile king for Singapore or Colombia designers.

Ethical Glow-Up → Post-“llama-4” legal woes, expect tougher data ethics—think blockchain-tracked sources.

Final Verdict→ Is LLaMA 4 the GPT-4 Killer?

Alright, Zypa team, we’ve reached the moment of truth—time to slap a large, bold verdict on this “llama 4” vs GPT-4 duel! We’ve deconstructed Meta’s “llama ai” from top to bottom, put it against OpenAI’s heavyweight champ, and now it’s judgment day. Is “llama 4” the GPT-4 killer we’ve all been waiting for in 2025, or just a showy contestant swinging above its weight class? Spoiler → it’s a wild journey, and your content creation and digital growth game’s about to get a significant upgrade either way.

Privacy → “Llama 4″’s on-device processing is a slam dunk for China or US authorities. GPT-4’s cloud reliance? A privacy red flag.

Versatility → Multimodality provides “llama 4” a sparkling edge—text and graphics in one go surpasses GPT-4’s plugin patchwork.

So, the verdict? “Llama 4” isn’t a full-on GPT-4 killer—yet. It’s more like the scrappy underdog landing lethal jabs, pushing the champ to reconsider its strategy. For your Zypa goals—content production, digital growth, innovation—“llama 4” is a powerhouse that delivers where it counts. GPT-4’s still got its throne, but the crown’s wobbling.

- Winner for Creators → “Llama 4″—fast, free, and adaptable.

- Winner for Enterprises → GPT-4—raw power still rules.

- Final Call → “Llama 4” is the future → GPT-4’s the present.

Summary Table→ LLaMA Versions Compared Side-by-Side

We’ve watched the evolution—LLaMA 1’s research vibes, LLaMA 2’s public premiere, LLaMA 3’s multimodal tease, and “llama 4″’s full-on assault on GPT-4. Each version’s delivered something new, and “llama 4” is the climax of Meta’s AI hustle. Let’s lay it out in a table, then deconstruct the highlights so you’re ready to roll.

| Feature | LLaMA 1 (2023) | LLaMA 2 (2023) | LLaMA 3 (2024) | LLaMA 4 (2025) |

|---|---|---|---|---|

| Parameter Count | 7B-65B | 7B-70B | 1B-90B | Scout→ 30B-50B, Maverick→ 100B+ |

| Purpose | Research | General + Coding | Multimodal + Privacy | Advanced Multimodal |

| Key Feature | Efficiency | Code LLaMA | LLaMA 3.2-Vision | MoE + Early Fusion |

| Multimodality | Text only | Text only | Text + Images | Text, Images, More? |

| Accessibility | Research only | Public release | Wider tools | Free via vLLM, HF |

| vs GPT-4 | Niche competitor | Closer rival | Strong contender | Near equal |

LLaMA 1 → The OG—lean, mean, and research-only. It set the stage but couldn’t touch GPT-4.

LLaMA 2 → Stepped up with Code LLaMA and public access, making “llama 2 vs gpt 4” a discussion. Still text-only, though.

LLaMA 3 → Multimodal wizardry with 3.2-Vision and privacy perks— “llama 3 vs gpt-4” got extremely close.

LLaMA 4 → The big dog—MoE, Scout vs Maverick, and multimodal muscle. “Llama 4” against GPT-4 is neck-and-neck.