On March 31, 2026, Anthropic accidentally shipped the entire source code of Claude Code – 512,000 lines across 1,906 TypeScript files – to the public npm registry. Claude Leak due to a single misconfigured debug file. Anthropic confirmed it was human error, not a breach. In this post, we’ll cover exactly what was exposed, what competitors now know, and the security risks that followed.

What Occurred: The Technical Failure

Claude Code v2.1.88 from Anthropic was released to npm along with a cli.js.map source map file that was never supposed to be part of a production release.

The 57–59.8 MB.map file led directly to a ZIP archive stored on Anthropic's own Cloudflare R2 storage bucket. The complete, unobfuscated TypeScript source could be downloaded and decompressed by anyone who discovered the URL.

Chaofan Shou, a security researcher, was the first to notice it and shared it on X. Nearly 10 million people viewed the finding. The code was mirrored on GitHub and forked more than 41,500 times in a matter of hours.

Root cause: Source maps are automatically generated by Bun, the JavaScript runtime Anthropic. Nobody left *.map out of package.json's files field or .npmignore. Everything was exposed by a single incorrectly set build file.

This incident, widely referred to as the Claude leak and Anthropic source code leak, highlights the Claude Code leak 2026.

Claude Feature that Beats Designers: What Is Claude Design: Features, Uses & Chat Integration

The contents of the code that was leaked

The source code that was leaked contained:

- LLM API call engine: token counting, retry logic, tool-call loops, and streaming responses

- Claude Code hooks, MCP server integrations, and environment variable handling at the permission and execution layer

- Memory and state systems, including autonomous daemons, background agents, and persistent memory

- System prompts are internal instructions from Claude Code that are integrated right into the CLI.

- Anti-distillation mechanisms: logic intended to tamper with rival training data by inserting fictitious tool definitions into API traffic

Yes, someone at Anthropic was enjoying themselves with these 187 unique spinning verb sentences.

User information, API credentials, and AI model weights were not disclosed in the hack.

Public Access to Hidden Features

44 feature flags-fully developed features hidden behind disabled switches in the production build - were made public by the leak. Axios claims that these consist of:

- autoDream is a memory consolidation daemon that merges observations and eliminates logical conflicts while the user is not using it.

- KAIROS always-on autonomous background agent mode - has been mentioned more than 150 times.

- Use a phone or another browser to control Claude Code remotely.

- Session review: To enhance performance going on, Claude examines its own recent sessions.

- The buddy/companion system, a Tamagotchi-style pet feature with sprite animations, is scheduled to launch on April 1–7, 2026.

Vaporware is not what these are. The code is compiled. Because the full roadmap has already been developed, Anthropic is delivering features gradually.

Build Agents In Using Claude Now: What is Claude Console: How to BUILD Claude Managed Agents

Subsequent Security Risks

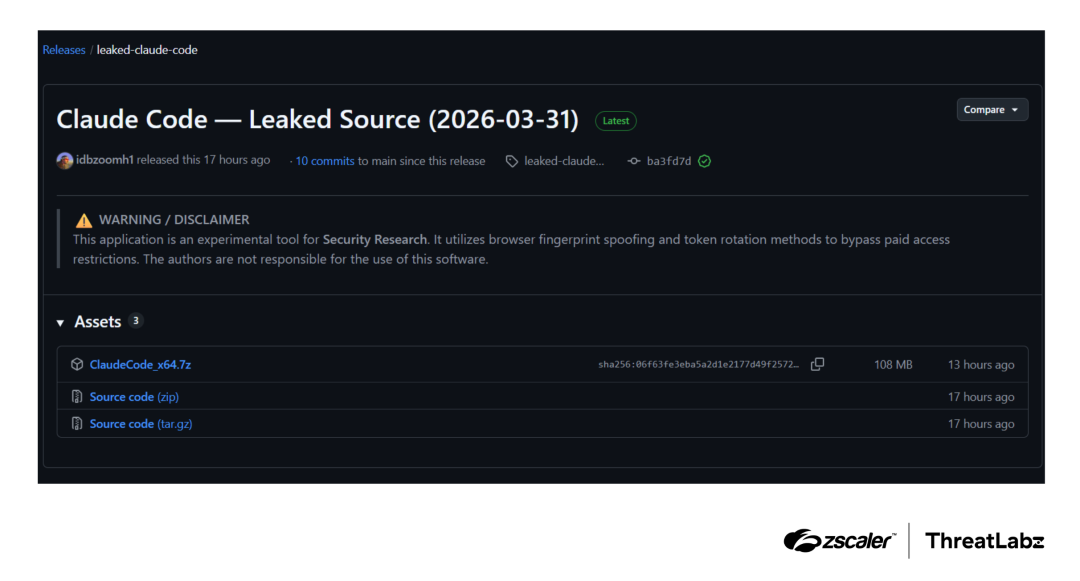

A different supply-chain attack on the axios npm package, which is a direct dependency of Claude Code, occurred concurrently with the leak. Malicious versions of Axios (1.14.1 and 0.30.4) with a Remote Access Trojan (RAT) were released on March 31 between 00:21 and 03:29 UTC.

If you used npm to install or update Claude Code during that time, look for the following in your lockfiles (package-lock.json, yarn.lock, bun.lockb):

- 0.30.4 or axios 1.14.1

- The plain-crypto-js dependency

Extra dangers resulting from exposure to the source:

- Attackers can now create carefully constructed malicious repositories that discreetly run shell operations because they are aware of the specific orchestration mechanism for Hooks and MCP servers.

- With full source visibility, it is now much simpler to weaponize pre-existing CVEs (CVE-2025-59536, CVE-2026-21852).

- In order to propagate the malware Vidar and GhostSocks, threat actors are actively disseminating phony "leaked Claude Code" builds.

Any GitHub repository claiming to house the disclosed source should not be downloaded, forked, or executed. Use only Anthropic channels that are authorized.

This Claude data leak combined with the Anthropic Human Error Leak has raised serious concerns in the developer community.

The Official Anthropic Reaction

Anthropic directly verified the occurrence:

"Some internal source code was included in a Claude Code release earlier today. There was no exposure or involvement of private client information or credentials. This was not a security compromise, but rather a release packaging problem brought on by human error. We're putting policies in place to make sure this doesn't happen again."

According to Fortune, details of a future AI model were also discovered in a publicly available data cache a few days prior, making this Anthropic's second significant data mishap in less than a week. The business claimed a yearly revenue run-rate of $19 billion as of March 2026 and is currently getting ready for an IPO.

ChatGPT Competeting with Claude Silently: How to Make ChatGPT Agents: Create, Edit & Automate Tasks

What Rivals Acquired

By early 2026, Claude Code's annual revenue (ARR) had reached $2.5 billion, with 80% coming from enterprise. In essence, the compromised source serves as a free engineering blueprint for all rival products.

The Competitive Impact of What Was Exposed

- Management of context entropy

- A blueprint for enduring agent dependability

- Model of skeptical memory

- A copy-paste strategy to stop hallucination drift

- Orchestration of MCP + hooks

- Complete architecture for agent coordination

- Anti-distillation reasoning

- discloses IP protection tactics

- 20 features that are not yet shipped

- Intelligence on competitor roadmaps

Anthropic's solution to production-grade AI agent reliability is now directly referenced by competitors like Cursor, OpenAI's Codex, and Google's coding tools.

FAQ

Q: Did the Claude Code leak reveal user data?

No, Anthropic verified that no credentials, model weights, or customer information were present. The only source code that was visible was internal TypeScript.

Q: What is a source map file, and why was it risky?

For debugging purposes, a .map file connects minified production code to the original readable source. The entire original codebase is made public when it is included in a public npm package. It wasn't a hack; it was a build pipeline misconfiguration.

Q: Is it permissible to use or share the Claude Code source that was leaked?

No, proprietary code that has been leaked is not open source. It is still the intellectual property of Anthropic. It is legally risky to distribute or manufacture goods from it. Takedown notices have already been sent by Anthropic.

Q: Was the Claude Code leak connected to the Axios supply chain attack?

Although the two accidents happened at the same time, they don't seem to be connected. Nevertheless, developers who modified Claude Code on March 31 faced increased risk because both had an impact on the same tool on the same day.

Q: Has the leak been fixed by Anthropic?

Indeed, Anthropic removed the impacted version of npm and is putting build pipeline constraints in place to stop source maps from being included in upcoming production releases.

Conclusion

One incorrectly configured line in a build file led to the 2026 Claude data leak, which gave rivals a full engineering reference for a $2.5 billion ARR product. Despite Anthropic's claim that no user data was compromised, the disclosure of 44 unreleased features, internal system prompts, and agent architecture represents a significant strategic loss. The malware spreading in phony mirrors poses a greater immediate threat to developers than the disclosure itself. Remain on the official channels.

Discover comprehensive coverage of the newest developments in product intelligence, security, and AI tools on ZYPA.